Projects

-

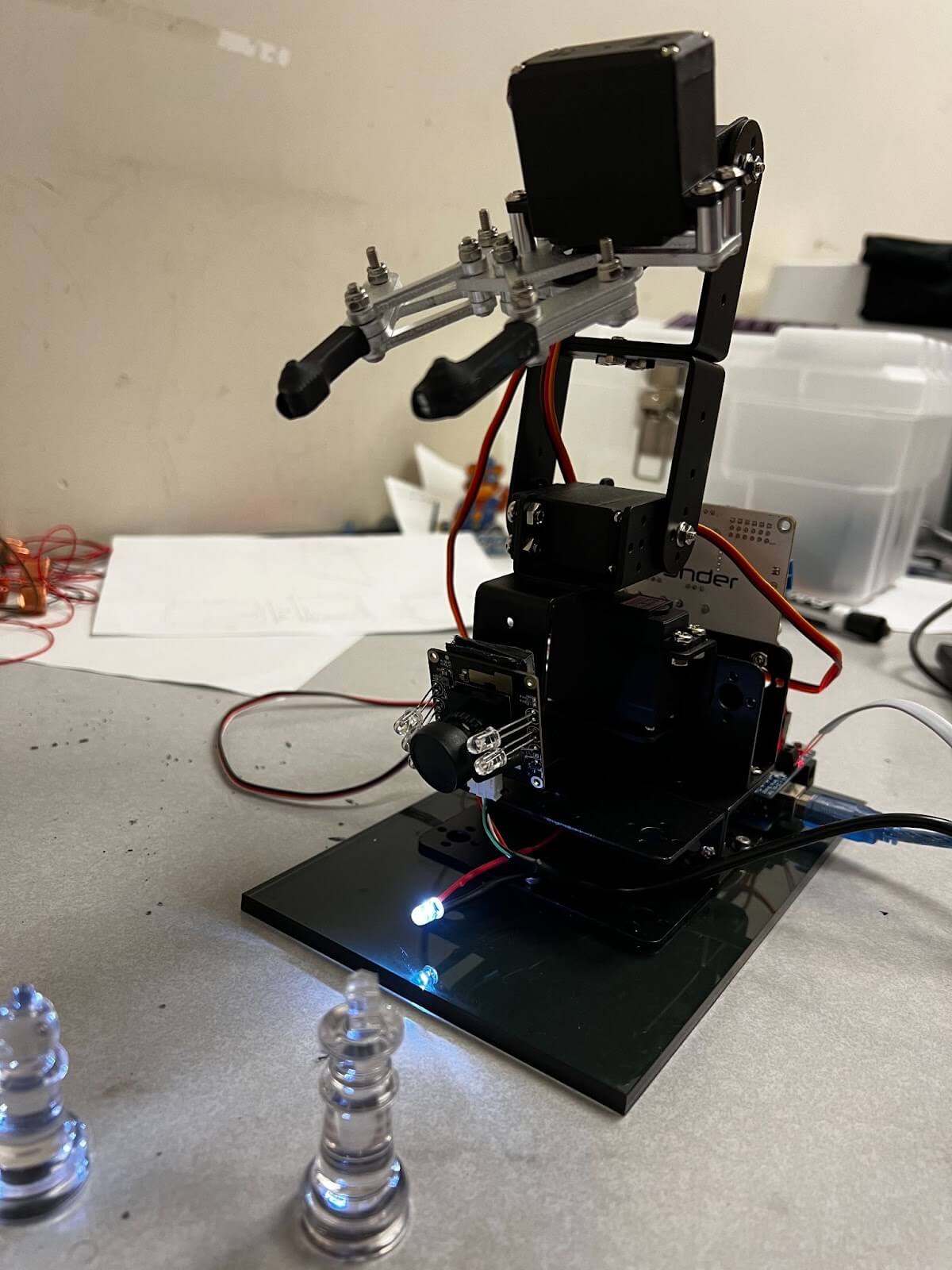

This is the final project for the Robotics: Kinematics and Dynamics course (Fall 2021, UNL). The project team consists of Renick, Spencer, and me. The project aims to implement a vision-guided robot arm that picks up an object selected by the input and places it at another location. We used chess pieces as the objects and machine learning for the vision processing.

Keywords - Deep Learning, Robot Arm, Vision-guided motion

Technologies - Python, TensorFlow, Arduino.

-

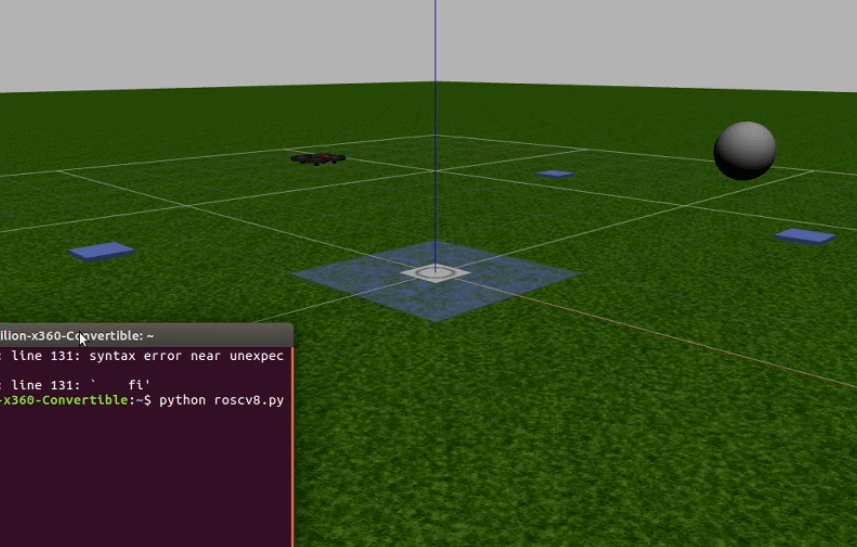

This is the final project for the Robotics: Unmanned Aerial Systems course (Fall 2020, UNL). The project team consists of Paul, Ji Young, and me. The project aims to implement a seeker drone that identifies the snitch (a golden ball) in the world and catches it. The drone used is a Parrot Bebop.

The proposed method was verified in the Gazebo simulator with a Parrot Bebop drone.

Keywords - UAV, Gazebo, Parrot Bebop Drone

Technologies - Python, C++, OpenCV, ROS, Gazebo

-

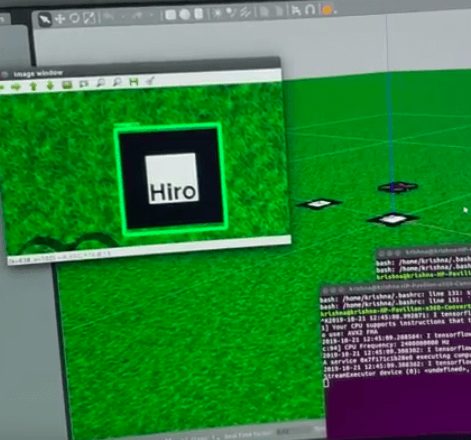

This is the final project for the Cyber Physical Systems course (Fall 2019, UNL). The project aims to identify two types of markers through deep-learning-based object detection. The built model is attached to the Parrot drone's camera to track the marker.

The proposed method has been tested in simulation using the TUM simulator, and testing on a real drone is the next step.

Keywords - UAV, TUM Simulator, Parrot AR Drone, Object Detection, Deep Learning

Technologies - Python, OpenCV, ROS, Gazebo, TensorFlow

-

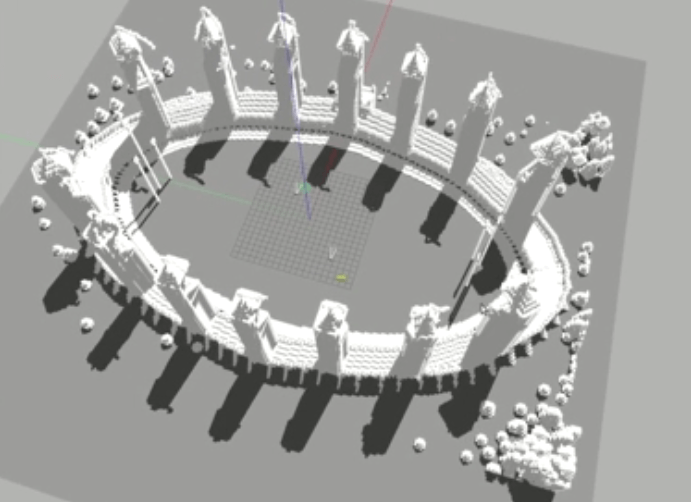

This is the final project for the Robotics Today course (Fall 2019, UNL). The project addresses landing multiple drones using a single fiducial marker. Each drone lands in the area surrounding the marker, so that the other drones can still use the marker to track the landing spot. Contour identification is used to detect the marker, and a PD controller is used to control the drone.
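To give a flavour of the control step, here is a minimal sketch of a generic single-axis PD controller that turns the offset between the drone and the detected marker into a velocity command. It is illustrative only and not the project code: the class name, the gains, the time step, and the sample offsets are all assumptions.

```python
class PDController:
    """Generic proportional-derivative controller for one axis (hypothetical helper)."""

    def __init__(self, kp, kd):
        self.kp = kp              # proportional gain (assumed value, tune per platform)
        self.kd = kd              # derivative gain (assumed value, tune per platform)
        self.prev_error = None    # no previous sample before the first update

    def update(self, error, dt):
        """Return the control output for the current error and time step dt."""
        if self.prev_error is None:
            derivative = 0.0      # no derivative term on the very first sample
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.kd * derivative


def clamp(value, limit):
    """Keep a command inside [-limit, limit]."""
    return max(-limit, min(limit, value))


if __name__ == "__main__":
    controller = PDController(kp=0.5, kd=0.1)
    # Made-up normalized horizontal offsets of the marker from the image centre.
    for error in [1.0, 0.6, 0.3, 0.1]:
        command = clamp(controller.update(error, dt=0.1), limit=1.0)
        print(f"offset={error:+.2f} -> command={command:+.3f}")
```

In practice one such controller would typically run per axis, with the marker offset coming from the contour detection step.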

The proposed method has been tested in simulation using the TUM simulator, and testing on a real drone is the next step.

Keywords - UAV, TUM Simulator, Parrot AR Drone

Technologies - Python, OpenCV, ROS, Gazebo

The simulation result can be found below.

-

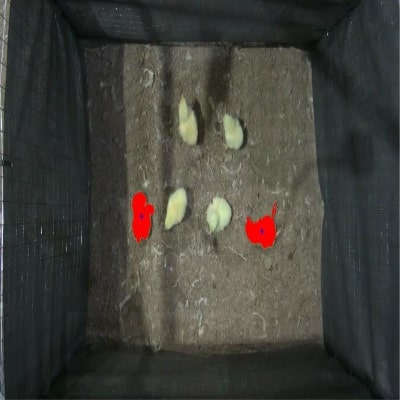

This experiment is about identifying dead chickens in a commercial broiler house using image processing. It is a collaborative project between the Agriculture department and the Computer Science department, monitored by Dr. Zhao and Dr. Zhang. I worked on implementing the computer vision system that identifies the dead chickens. This work was recently published in ASABE. Please see my publications page for more details about this project.

We are now working on integrating this computer vision module with a robot arm to pick up the dead chickens.

Technologies - OpenCV, MATLAB.

-

This project is about implementing an autonomous quadcopter for mail delivery. We (Naresh, Anh, Guoming, and I) built a DIY (Do It Yourself) quadcopter from purchased parts and got it flying through communication between a Raspberry Pi and a PC. We are now working on making it fly by itself using 3D path planning and SLAM.

Keywords - Quadcopter

Hardware - Raspberry Pi, Carbon Fiber frame, 2300KV Brushless Motors, 12A ESC, 6*3 Carbon Fiber Propellers

Technologies - Python, OpenCV

-

I implemented an unmanned ground vehicle, which ABE students used to run experiments in a poultry house. It was first built as an autonomous robot and later converted to an RC-based robot because of the experimental conditions.

Hardware - SuperDroid Robotics Chassis, Sabertooth Motor Shield, Arduino, Spektrum DXe Transmitter & Receiver.

Technologies - Arduino.

-

This project is about aligning a virtual object with the real world in a stable manner, which is the fundamental aim of Augmented Reality. I am working on this project under Dr. Swan's supervision.

We are using HoloLens SLAM (Simultaneous Localization and Mapping) to keep the object stably aligned in the real world, Vuforia for tracking the marker, and Unity for building the application.

Hardware - HoloLens.

Technologies - Unity, C#

-

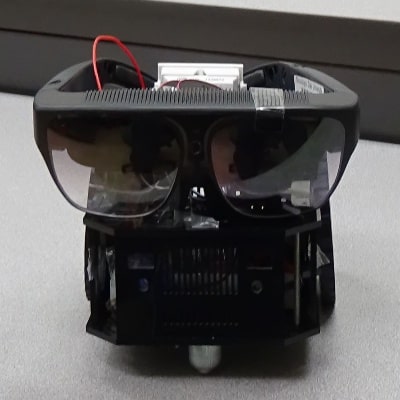

This is the final project for the AI Robotics course (Spring 2017, MSU). I implemented a robot that can navigate in an AR world, which contains both the real world and a virtual world. The robot needs to reach the goal position, where a virtual robot is located, using path planning and the help of sensors. An ultrasonic range finder handles real-world obstacles, and the ODG R7 (AR glasses) with the Pixy sensor guides the robot in the virtual world. We used Depth First Search (DFS) and Q-learning (an active reinforcement learning method) to guide the robot to the goal. You can see the results in the provided video. The paper was accepted at an IEEE Robotics and Automation Society venue; details are on my publications page.

Keywords - Robotics, Augmented Reality, Path Planning, Reinforcement Learning, Depth First Search.

Hardware - RoboIndia Chassis, CMU Pixy Sensor, ODG R7 Glasses, Ultrasonic range finders, Arduino UNO.
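For readers unfamiliar with DFS-based path planning, the sketch below shows the general idea on a small occupancy grid. It is written in Python for readability and is not the project's Arduino code; the grid layout, the function name `dfs_path`, and the 0/1 cell encoding are assumptions made for illustration.

```python
def dfs_path(grid, start, goal):
    """Depth-first search on a grid; returns a list of (row, col) cells or None.

    grid is a list of lists where 0 marks a free cell and 1 marks an obstacle
    (an assumed encoding for this sketch).
    """
    rows, cols = len(grid), len(grid[0])
    stack = [(start, [start])]   # each entry holds a cell and the path that led to it
    visited = set()
    while stack:
        cell, path = stack.pop()
        if cell in visited:
            continue
        visited.add(cell)
        if cell == goal:
            return path
        r, c = cell
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in visited:
                stack.append(((nr, nc), path + [(nr, nc)]))
    return None                  # the goal cannot be reached


if __name__ == "__main__":
    # Made-up map: the robot starts top-left, the goal is bottom-right.
    demo_grid = [
        [0, 0, 0, 0],
        [1, 1, 0, 1],
        [0, 0, 0, 0],
        [0, 1, 1, 0],
    ]
    print(dfs_path(demo_grid, (0, 0), (3, 3)))
```

Note that DFS returns some obstacle-free path, not necessarily the shortest one.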

Technologies - Arduino

You can find the output video files below.

-

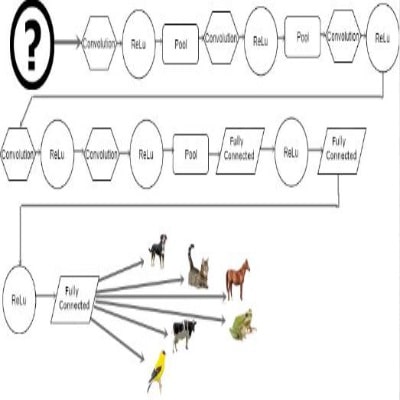

This is our final project for the Machine Learning course (Fall 2017, MSU). We (Anh and I) implemented an image classifier that classifies seven varieties of animals using deep learning.

Keywords - Deep Learning, Convolutional Neural Networks.

Technologies - Python, Anaconda, Keras.

-

This is the final project for Visual Data Analysis with R (Spring 2017, MSU). In this project I implemented an SVM-based classifier to predict whether a passenger survived the Titanic or not. I then extended it into an app that predicts your chance of survival for different passenger classes and fare rates.

Keywords - Data Processing, Support Vector Machine (SVM), Kernel-SVM.

Technologies - R.

-

This is our final project for the Artificial Intelligence course (Fall 2016, MSU). We (Naresh and I) had to implement an agent (ours is named 'PeaceAgent') to play the game RISK. A competition was held against all the other agents in the class. Our agent placed 6th in the two-player competition and 2nd in the three-player competition.

Keywords - Strategy.

Technologies - Python.

-

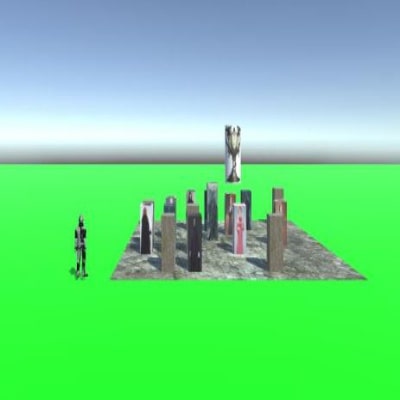

This is my final project for the Algorithms course (Fall 2016, MSU). In this project, I implemented an agent trained with Q-learning to reach the Triwizard Cup while avoiding the Death Eaters and obstacles. The code is implemented in Python, and the interface is built with Unity and C#.

Keywords - Reinforcement Learning, Q-learning.

Technologies - Python, Unity, C#.
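As a rough idea of how such an agent can be trained, here is a minimal tabular Q-learning sketch on a small grid. It is not the project code: the grid size, the reward values, the hazard positions (standing in for the Death Eaters), and all function names are assumptions chosen for illustration.

```python
import random

ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def step(state, action, size, goal, hazards):
    """Apply an action on the grid and return (next_state, reward, done)."""
    # Moving into a wall leaves the agent in place.
    r = min(max(state[0] + action[0], 0), size - 1)
    c = min(max(state[1] + action[1], 0), size - 1)
    nxt = (r, c)
    if nxt == goal:
        return nxt, 10.0, True      # reached the cup (assumed reward)
    if nxt in hazards:
        return nxt, -10.0, True     # caught by a hazard (assumed penalty)
    return nxt, -1.0, False         # small cost per move


def train(size, start, goal, hazards, episodes=2000,
          alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q-table with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * len(ACTIONS) for r in range(size) for c in range(size)}
    for _ in range(episodes):
        state = start
        for _ in range(100):        # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = q[state].index(max(q[state]))
            nxt, reward, done = step(state, ACTIONS[a], size, goal, hazards)
            # Q-learning update: bootstrap from the best action in the next state.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            if done:
                break
    return q


def greedy_path(q, size, start, goal, hazards, max_steps=50):
    """Follow the learned greedy policy from start and return the visited cells."""
    path, state = [start], start
    for _ in range(max_steps):
        a = q[state].index(max(q[state]))
        state, _reward, done = step(state, ACTIONS[a], size, goal, hazards)
        path.append(state)
        if done:
            break
    return path


if __name__ == "__main__":
    hazards = {(1, 1), (2, 3)}
    q_table = train(4, (0, 0), (3, 3), hazards)
    print(greedy_path(q_table, 4, (0, 0), (3, 3), hazards))
```

The fixed seed only makes the sketch reproducible; the learning rate, discount factor, and exploration rate would need tuning for a larger world.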

You can download the sample interface here.

-

We (Naveen and I) did this project as the final-year project of our undergraduate degree, guided by Ms. Sowmini Devi. We implemented a movie recommender system using the 'imputation of missing values' approach from machine learning. The model is built with a collaborative filtering approach.

Keywords - Collaborative Filtering, Matrix Factorization, Machine Learning.

Technologies - C, MATLAB.
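The idea of imputing missing ratings through matrix factorization can be sketched as follows. This is a generic illustration in Python, not the original C/Matlab implementation: the tiny ratings matrix, the hyperparameters, and the function names are all assumptions.

```python
import random


def predict(P, Q, u, i):
    """Predicted rating of item i by user u: dot product of their latent factors."""
    return sum(p * q for p, q in zip(P[u], Q[i]))


def factorize(ratings, k=2, epochs=2000, lr=0.01, reg=0.02, seed=0):
    """Fit user factors P and item factors Q on the observed ratings with SGD.

    Missing ratings are encoded as None (an assumed convention for this sketch).
    """
    rng = random.Random(seed)
    n_users, n_items = len(ratings), len(ratings[0])
    P = [[rng.random() for _ in range(k)] for _ in range(n_users)]
    Q = [[rng.random() for _ in range(k)] for _ in range(n_items)]
    observed = [(u, i, ratings[u][i])
                for u in range(n_users) for i in range(n_items)
                if ratings[u][i] is not None]
    for _ in range(epochs):
        for u, i, r in observed:
            err = r - predict(P, Q, u, i)
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # Gradient step with L2 regularization on both factors.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q


def rmse(ratings, P, Q):
    """Root-mean-square error over the observed ratings only."""
    errors = [(r - predict(P, Q, u, i)) ** 2
              for u, row in enumerate(ratings)
              for i, r in enumerate(row) if r is not None]
    return (sum(errors) / len(errors)) ** 0.5


def impute(ratings, P, Q):
    """Keep observed ratings and fill the missing ones with predictions."""
    return [[r if r is not None else predict(P, Q, u, i)
             for i, r in enumerate(row)]
            for u, row in enumerate(ratings)]


if __name__ == "__main__":
    # Made-up user x movie ratings on a 1-5 scale; None means "not rated".
    demo = [
        [5, 3, None, 1],
        [4, None, None, 1],
        [1, 1, None, 5],
        [1, None, None, 4],
        [None, 1, 5, 4],
    ]
    P, Q = factorize(demo)
    for row in impute(demo, P, Q):
        print([round(x, 2) for x in row])
```

The filled-in values can then be ranked per user to produce recommendations.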

-

For the 'Software Engineering' project, we (two of my classmates, Naveen and Graceleena, and I) implemented a web-based blood bank management system that brings together donors and recipients.

Technologies - HTML, JavaScript, PHP, MySQL